To enable data-driven discussions about GenAI in investigations, we are organizing a 4-week, vendor-agnostic challenge with a panel of judges (AI advocates and skeptics), public voting, and sharing all of the results.

The goal here is not to promote or bash any single product or LLM. It’s to share what currently works and what doesn’t.

The basic concept is:

- You submit SANITIZED screen shots of where GenAI was amazing, where it went bad, and where you’re not sure it helped or hurt.

- A panel of industry judges will review for the top 5 amazing ones and the top 5 disasters.

- The public will vote on the final winners.

- The winners get bragging rights!

The judges include (alphabetical by last name):

- Heather Barnhart (SANS) – LinkedIn

- Alexis Brignoni (LEAPPS) – LinkedIn

- Eric Capuano (Digital Defense Institute) – LinkedIn

- Brian Carrier (Sleuth Kit Labs – Organizer) – LinkedIn

- Filip Stojkovski (BlinkOps) – LinkedIn

Submissions:

- Submissions are due by May 25, 2026 11:59PM EST.

- The form is here: https://tally.so/r/vG0rrQ

Submit any questions to The Sleuth Kit Forum.

Submission Requirements

The goal here is for honest and well-intentioned submissions from practitioners using data from:

- Actual investigations

- CTFs

- Course data sets

- Realistic test data

Vendors can submit results from their own tools, but they need to:

- Disclose they are a vendor

- Use a publicly available CTF or other data set (no hand crafted demo data….)

Example public data sources include:

- https://arsenalrecon.com/insights/publicly-accessible-disk-images-grid-for-dfir

- https://ctf.null404.org

- https://cfreds.nist.gov/

Submissions will include:

- Context of the data

- What you prompted

- Screenshots of the results

- Why do you think it’s amazing, a disaster, or a snooze-fest?

- Infrastructure details

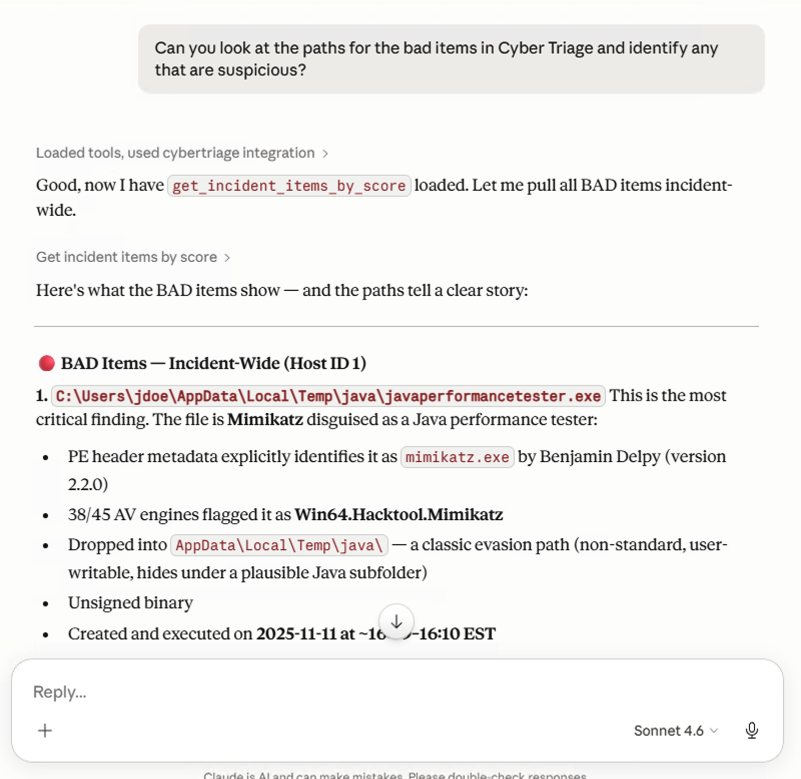

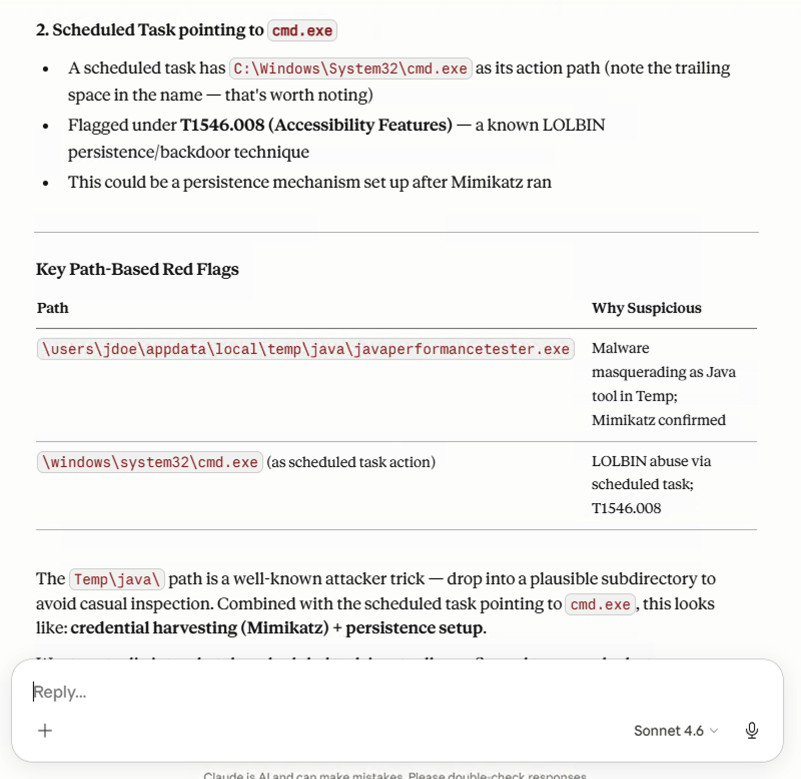

Here’s an example of using Claude Desktop with the Cyber Triage MCP server and the eval data set. It required two screen shots. I’d rank this is mixed results on the value of the GenAI.

Screenshot 1:

Screenshot 2:

Voting Process

Submissions will be reviewed by a panel of judges that include AI skeptics and advocates.

The criteria will include:

- Clarity: Is it obvious from the screenshot + context what happened? Can someone learn from it without needing a 10-minute explanation?

- Significance: Did the result provide either a much faster result or a novel finding? Or a really dangerous finding?

- Realistic: Is the data set realistic or is it a bit esoteric?

- Teachability: Would this make someone better at using (or being skeptical of) GenAI in their workflow? Is there a takeaway from it?

Requirements to Win

- Submit all of the info on the form (screen shots, context, etc)

- Include your email so that we can verify it’s a real submission. We won’t publish this though and results can be posted anonymously.

Schedule

- April 27: challenge starts

- May 25: submissions are due

- June 8: Public voting begins

- June 15: Public voting ends

- June 18: Winners are announced

Getting Started

If you are new to GenAI and investigations, here are some starting links: