Continuing our Cyber Triage in the Cloud series, we’re going to look at Google Cloud Platform (GCP) and what it offers as a DFIR cloud platform. This post will be useful if you are already a GCP customer or if you are looking to move your work to the cloud.

We are going to talk about database options that can be used with Cyber Triage and how to store artifact uploads from your customers. The database options are also applicable to Autopsy, since it shares the same PostgreSQL database as Cyber Triage Team.

Spoiler Alert: Google is cheaper and faster than AWS in our tests.

2025 Update: Since releasing this post and helping our customers, we now recommend that you always use a PostgreSQL database on the same VM as the Cyber Triage Server. We see many performance issues when using the cloud-native databases.

Benefits of DFIR in The Cloud

First, let’s review the basics of why DFIR labs are moving to the cloud. Namely:

- Scaling: It can be easier to set up Server VMs and expand storage as needed.

- Access: For consultants and MSSPs, a cloud is a central place that doesn’t require either party to give the other VPN access to their internal network. The cloud is also an easier place for responders to get access to when they are traveling or working remotely.

- Maintenance: If you use cloud-provided services, then you will likely not need to worry about software updates and backups.

GCP Database Options

On GCP, there are currently two options for running a PostgreSQL database, though that may change soon.

- Cloud SQL: With this option, Google will run and manage a PostgreSQL database for you. They will handle servers, failovers, storage, etc. For those who read the AWS post, this is most similar to AWS Relational Database Service (RDS).

- Manual Install: You can launch a Windows VM and install PostgreSQL on it. It will be up to you to update and scale the database.

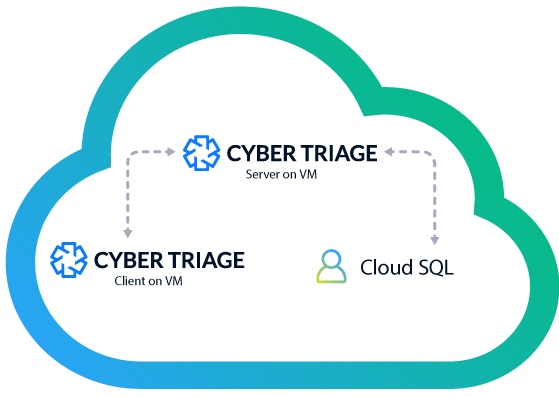

Here’s an example Cyber Triage deployment with Cloud SQL.

Google has announced a possible third option: a PostgreSQL interface for Spanner. Spanner is Google’s cloud-native relational database, kind of like AWS Aurora. It will look like PostgreSQL, but it will not actually store data the same way PostgreSQL does. We have not used it yet (it is not officially released), so we do not know how it will compare with Cloud SQL.

Picking a GCP Database for Incident Response

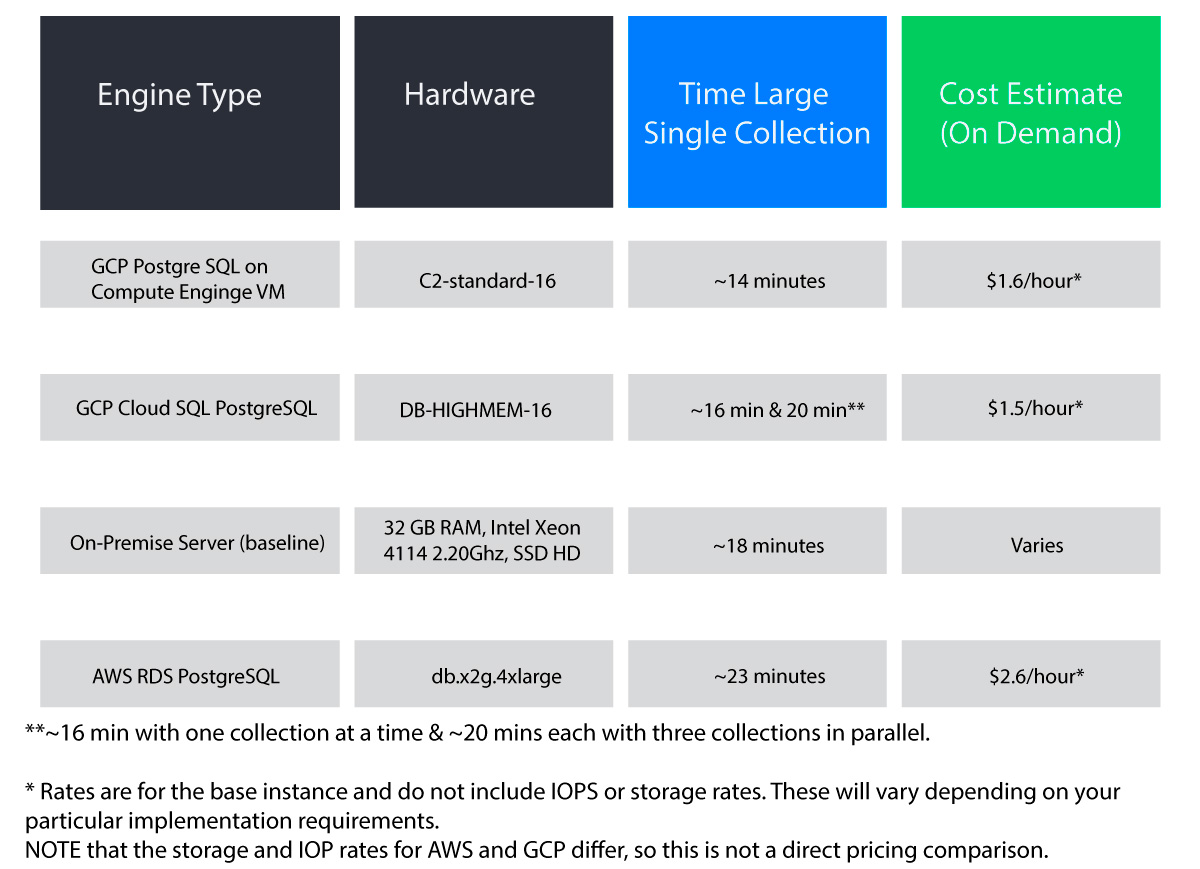

Like we did for AWS, we did some basic testing and cost profiling. We performed two tests per configuration. One was to time how long it takes to ingest a single copy of a large test collection (2.5GB). The second was to time how long it takes to add 50 copies of that collection with three being processed in parallel.

Note that we did not test all memory and CPU options. The goal here is to provide some basic direction on the pros and cons of each. For reference, we’ve included a set of AWS numbers as well, but keep in mind that it is hard to compare cloud platform prices because each platform factors in different metrics.
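
If you want to run a similar comparison on your own configuration, here is a minimal sketch of how the two timing runs could be structured. It is not the harness we used. `fake_ingest` is a hypothetical placeholder that only sleeps; a real run would replace it with whatever starts an ingest of your test collection.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def time_batch(ingest, jobs, parallel=3):
    """Run ingest(job) for every job, at most `parallel` at a time.

    Returns the wall-clock seconds taken by the whole batch.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=parallel) as pool:
        # list() waits for every job and re-raises any exception a job threw.
        list(pool.map(ingest, jobs))
    return time.perf_counter() - start


def fake_ingest(job):
    # Hypothetical stand-in: a real test would ingest one collection here.
    time.sleep(0.01)


if __name__ == "__main__":
    # Test 1: a single copy of the collection.
    single = time_batch(fake_ingest, ["copy-1"], parallel=1)
    # Test 2: 50 copies, three processed in parallel.
    copies = [f"copy-{i}" for i in range(1, 51)]
    batch = time_batch(fake_ingest, copies, parallel=3)
    print(f"single: {single:.2f}s, 50 copies (3 parallel): {batch:.2f}s")
```

Measuring wall-clock time for the whole batch, rather than per job, matches how the results are reported here: total processing time per configuration.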

Here are the 2021 results, ordered by processing time.

Our April 2025 recommendations are:

- Use PostgreSQL installed on the same VM as the Cyber Triage Server.

- Cloud-native databases do not perform as well.

- If you are not yet tied to a cloud platform, then GCP seems to be cheaper and faster than AWS.

Cyber Triage Workflow For Google Cloud Forensics

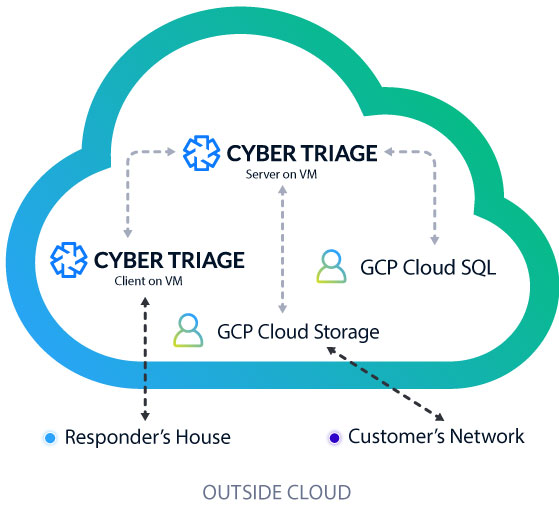

Here’s an example setup of using Cyber Triage in GCP:

- A customer runs the Cyber Triage collection tool on a suspect host in their network and uploads the artifacts to a GCP Cloud Storage bucket.

- The responder logs in from home and imports the data into the Cyber Triage Server.

- Results are saved to the GCP Cloud SQL database.

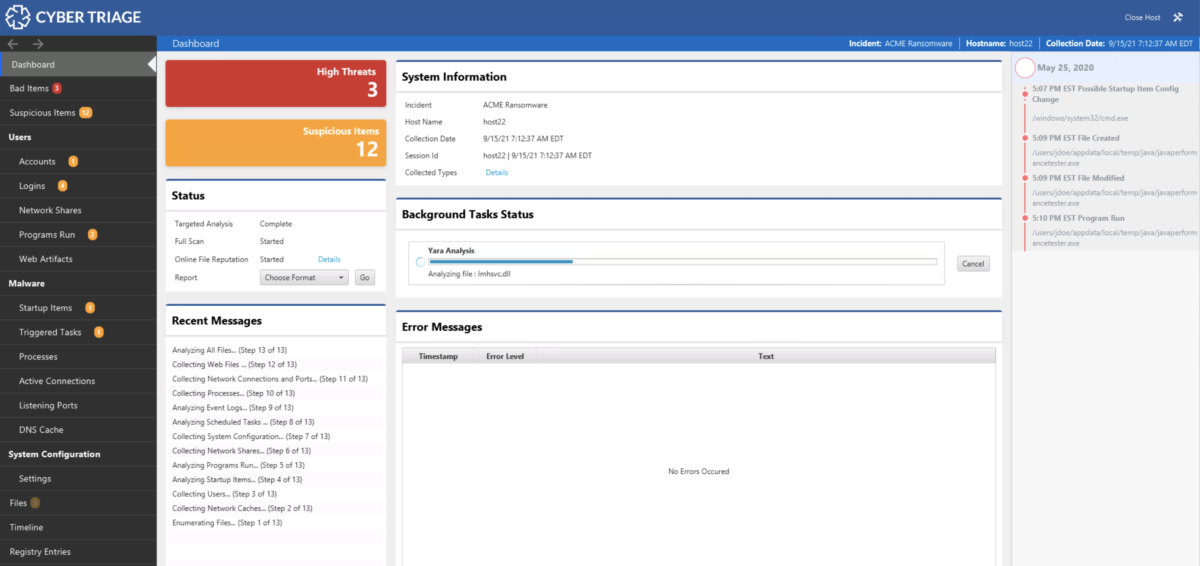

- The Cyber Triage Server will analyze all of the artifacts and assign scores.

- Other examiners can log in and assist as needed.

As a reminder, if you are going to use Cyber Triage in the cloud, we highly recommend that all parts of it run in the cloud. Cyber Triage was not designed to be exposed on a public IP, and it assumes that there is a fast connection between the Cyber Triage client and server.

How to Set Up Cloud SQL with Cyber Triage

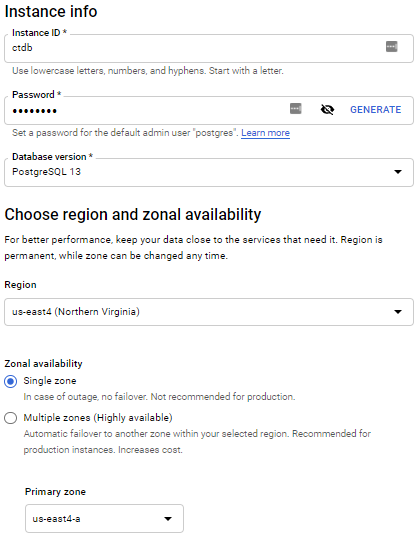

Setting up Cloud SQL is very easy. From the GCP dashboard, select SQL and “Create Instance”. Settings you’ll want include:

- Storage Type: SSD

- Storage Capacity: 100GB and enable automatic increases

- Connections: Only allow private connections

Give it a hostname and admin password.

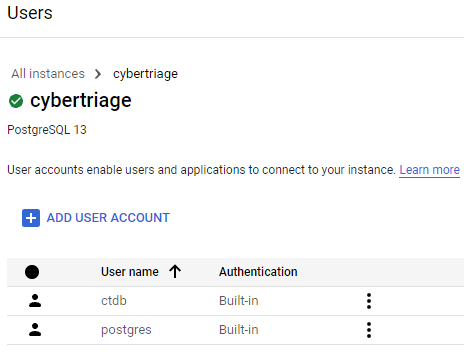

Once the database is set up, create a non-admin user that Cyber Triage will use:

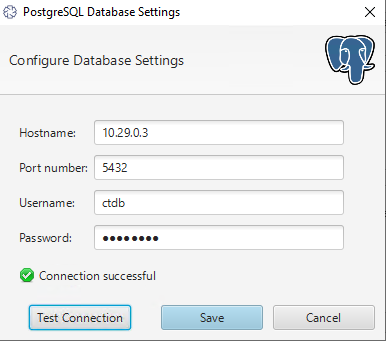

The final step is to configure Cyber Triage Server to use this database. You’ll need the private IP address of the database server and the username that you just created.
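
Because the instance only allows private connections, it can help to confirm that the Server VM can reach the database before you enter the settings. Here is a minimal sketch using only the Python standard library. It assumes the database listens on PostgreSQL’s default port, 5432, and the `10.0.0.5` address in the usage note is a made-up example.

```python
import socket


def can_reach(host, port=5432, timeout=3.0):
    """Return True if a TCP connection to host:port opens within `timeout`.

    This only checks network reachability. It does not log in, so it says
    nothing about whether the username and password are correct.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unroutable addresses.
        return False
```

Run it from the Cyber Triage Server VM, for example `can_reach("10.0.0.5")` with your instance’s private IP in place of the example address. A `False` result usually points to a network or firewall issue rather than a database issue.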

How to Set Up Cloud Storage

We’ve mentioned in the past that the Cyber Triage Collection tool can upload to S3 buckets. Google’s Cloud Storage has an S3-compatible API, so the collection tool can upload to it as well.

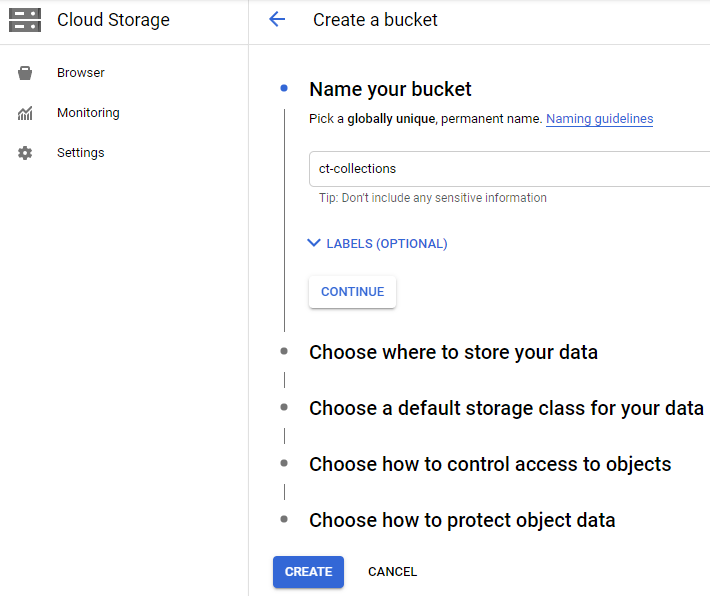

To set it up, go to the GCP Dashboard, choose Cloud Storage, and make a bucket. Keep track of the access keys because you’ll need them to configure the Cyber Triage Collection tool.

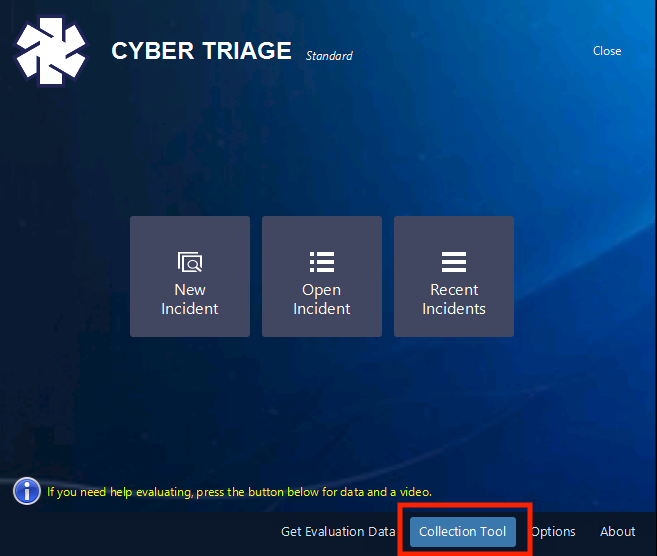

To configure the Collection Tool to use the bucket, choose “Collection Tool” from the opening window.

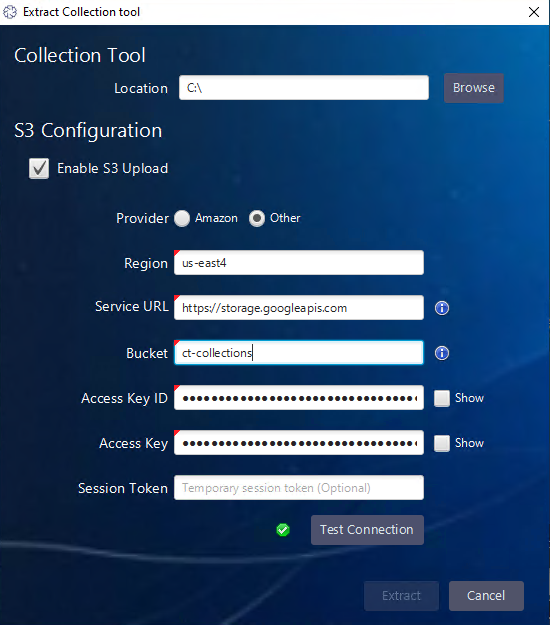

Then check the “Enable S3 Upload” option. Enter the region, service URL, and name of the bucket you created in the GCP dashboard.

Once you’ve extracted the collection tool with the bucket configuration, both the command line version and its corresponding GUI will upload the collected artifacts to GCP Cloud Storage.
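
To confirm that an upload arrived, you can list the bucket through the same S3-compatible API. The sketch below is not part of Cyber Triage. It assumes the third-party `boto3` library is installed, that you are using the access key and secret from the bucket setup, and that the service URL is `https://storage.googleapis.com` with the region set to `auto`. The function names are our own, for illustration only.

```python
GCS_S3_ENDPOINT = "https://storage.googleapis.com"


def s3_client_kwargs(access_key, secret, region="auto"):
    """Build keyword arguments for boto3.client("s3", ...) aimed at GCS."""
    return {
        "endpoint_url": GCS_S3_ENDPOINT,
        "aws_access_key_id": access_key,
        "aws_secret_access_key": secret,
        "region_name": region,
    }


def list_uploaded_keys(bucket, access_key, secret, region="auto"):
    """Return object names in the bucket (first page only, up to 1000)."""
    import boto3  # third-party: pip install boto3

    client = boto3.client("s3", **s3_client_kwargs(access_key, secret, region))
    response = client.list_objects_v2(Bucket=bucket)
    # "Contents" is absent when the bucket is empty.
    return [obj["Key"] for obj in response.get("Contents", [])]
```

Calling `list_uploaded_keys` with your bucket name and keys should return the names of the uploaded artifact files. An empty list means that nothing has been uploaded yet.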

Conclusion

Google’s cloud offerings are competitive, and Cyber Triage can work well in them. If you are looking to offload the management of your database and are running in the cloud, then Google could be a good platform. If you want to try Cyber Triage in either your cloud network or on your laptop, then fill out this form here.